cameras

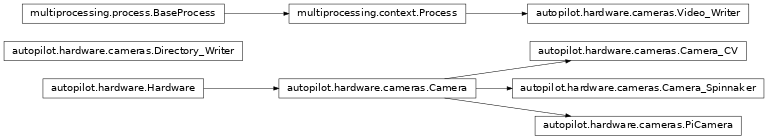

Classes:

Camera – Metaclass for Camera objects.

PiCamera – Interface to the Raspberry Pi Camera Module via picamera

Camera_CV – Capture Video from a webcam with OpenCV

Camera_Spinnaker – Capture video from a FLIR brand camera with the Spinnaker SDK.

Video_Writer – Encode frames as they are acquired in a separate process.

Functions:

List all available Spinnaker cameras and their |

- OPENCV_LAST_INIT_TIME = <Synchronized wrapper for c_double(0.0)>

Time the last OpenCV camera was initialized (seconds, from time.time()).

v4l2 has an extraordinarily obnoxious …feature – if you try to initialize two cameras at ~the same time, you will get a never-ending stream of informative error messages:

VIDIOC_QBUF: Invalid argument

The workaround seems to be relatively simple: we just wait ~2 seconds if another camera was just initialized.
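The workaround can be sketched as below. This is a minimal illustration, not the module's actual code: `LAST_INIT_TIME`, `MIN_INIT_GAP`, and `wait_for_init_slot` are hypothetical names, and the injectable `clock` and `sleep` arguments exist only so the logic can be exercised without really sleeping.

```python
import multiprocessing as mp
import time

# Hypothetical stand-ins for the shared value described above.
LAST_INIT_TIME = mp.Value('d', 0.0)
MIN_INIT_GAP = 2.0  # seconds, per the "~2 seconds" note above


def wait_for_init_slot(last_init=LAST_INIT_TIME, min_gap=MIN_INIT_GAP,
                       clock=time.time, sleep=time.sleep):
    """Block until at least ``min_gap`` seconds have passed since the last init.

    Returns the number of seconds slept.
    """
    with last_init.get_lock():
        remaining = min_gap - (clock() - last_init.value)
        waited = max(0.0, remaining)
        if waited > 0:
            sleep(waited)
        # Record this initialization so the next camera waits in turn.
        last_init.value = clock()
    return waited
```

Holding the lock while sleeping serializes initializations, so a third camera queues behind the second rather than racing it.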

- class Camera(fps=None, timed=False, crop=None, rotate: int = 0, **kwargs)[source]

Bases: autopilot.hardware.Hardware

Metaclass for Camera objects. Should not be instantiated on its own.

- Parameters

fps (int) – Framerate of video capture

timed (bool, int, float) – If False (default), camera captures indefinitely. If int or float, captures for this many seconds

rotate (int) – Number of times to rotate image clockwise (default 0). Note that image rotation should happen in _grab() or be otherwise implemented in each camera subclass, because it’s a common enough operation that many cameras have some optimized way of doing it.

**kwargs – Arguments to stream(), write(), and queue() can be passed as dictionaries, eg.: stream={'to':'T', 'ip':'localhost'}

When the camera is instantiated and capture() is called, the class uses a series of methods that should be overwritten in subclasses. Further details for each can be found in the relevant method documentation.

It is highly recommended to instantiate Cameras with a Hardware.name, as it is used in output_filename and to identify the network stream.

Three methods are required to be overwritten by all subclasses:

init_cam() - required - used by cam, instantiating the camera object so that it can be queried and configured

_grab() - required - capture a frame and timestamp

_timestamp() - required - get a timestamp for the frame

The other methods are optional and depend on the particular camera:

capture_init() - optional - any required routine to prepare the camera after it is instantiated but before it begins to capture

_process() - optional - the wrapper around a full acquisition cycle, including streaming, writing, and queueing frames

_write_frame() - optional - how to write an individual frame to disk

_write_deinit() - optional - any required routine to finish writing to disk after acquisition

capture_deinit() - optional - any required routine to stop acquisition but not release the camera instance.

- Variables

frame (tuple) – The current captured frame as a tuple (timestamp, frame).

shape (tuple) – Shape of captured frames (height, width, channels)

blosc (bool) – If True (default), use blosc compression when

cam – The object used to interact with the camera

fps (int) – Framerate of video capture

timed (bool, int, float) – If False (default), camera captures indefinitely. If int or float, captures for this many seconds

q (Queue) – Queue that allows frames to be pulled by other objects

queue_size (int) – How many frames should be buffered in the queue.

initialized (threading.Event) – Called in init_cam() to indicate the camera has been initialized

stopping (threading.Event) – Called to signal that capturing should stop. When set, ends the threaded capture loop

capturing (threading.Event) – Set when camera is actively capturing

streaming (threading.Event) – Set to indicate that the camera is streaming data over the network

writing (threading.Event) – Set to indicate that the camera is writing video locally

queueing (threading.Event) – Indicates whether frames are being put into q

indicating (threading.Event) – Set to indicate that capture progress is being indicated in stdout by tqdm

- Parameters

fps

timed

crop (tuple) – (x, y of top left corner, width, height)

**kwargs

Attributes:

test documenting input

what are we anyway?

Camera object.

Filename given to video writer.

Methods:

capture([timed]) – Spawn a thread to begin capturing.

_capture() – Threaded capture method started by capture().

_process() – A full frame capture cycle.

stream([to, ip, port, min_size]) – Enable streaming frames on capture.

l_start(val) – Begin capturing by calling Camera.capture()

l_stop(val) – Stop capture by calling Camera.release()

write([output_filename, timestamps, blosc]) – Enable writing frames locally on capture

_write_frame() – Put frame into the _write_q, optionally compressing it with blosc.pack_array()

_write_deinit() – End the Video_Writer.

queue([queue_size]) – Enable stashing frames in a queue for a local consumer.

_grab() – Capture a frame and timestamp.

_timestamp([frame]) – Generate a timestamp for each _grab()

init_cam() – Method to initialize camera object

capture_init() – Optional: Prepare cam after initialization, but before capture

capture_deinit() – Optional: Return cam to an idle state after capturing, but before releasing

stop() – Stop capture by setting stopping

release() – Release resources held by Camera.

- input = True

test documenting input

- capture(timed=None)[source]

Spawn a thread to begin capturing.

- Parameters

timed (None, int, float) – if None, record according to timed (default). If numeric, record for timed seconds.

- _capture()[source]

Threaded capture method started by capture().

Captures until stopping is set.

Calls capture methods, in order:

capture_init() - any required routine to prepare the camera after it is instantiated but before it begins to capture

_process() - the wrapper around a full acquisition cycle, including streaming, writing, and queueing frames

_timestamp() - get a timestamp for the frame

_write_frame() - how to write an individual frame to disk

_write_deinit() - any required routine to finish writing to disk after acquisition

capture_deinit() - any required routine to stop acquisition but not release the camera instance.
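The call order above can be illustrated with a toy class. This is a sketch under stated assumptions, not the library's implementation: `SketchCamera` is a hypothetical stand-in, `_process()` only records that it ran, and `capture()` returning the thread is a convenience for this example.

```python
import threading


class SketchCamera:
    """Hypothetical stand-in that mirrors the call order listed above."""

    def __init__(self, n_frames=3):
        self.stopping = threading.Event()
        self.calls = []
        self._remaining = n_frames

    def capture_init(self):
        self.calls.append('capture_init')

    def _process(self):
        # A real camera would grab, stream, write, and queue a frame here.
        self.calls.append('_process')
        self._remaining -= 1
        if self._remaining <= 0:
            self.stopping.set()

    def _write_deinit(self):
        self.calls.append('_write_deinit')

    def capture_deinit(self):
        self.calls.append('capture_deinit')

    def _capture(self):
        self.capture_init()
        # Loop until the stopping event is set.
        while not self.stopping.is_set():
            self._process()
        self._write_deinit()
        self.capture_deinit()

    def capture(self):
        thread = threading.Thread(target=self._capture, daemon=True)
        thread.start()
        return thread
```

Setting `stopping` from any thread ends the loop after the current `_process()` call, which is why the deinit hooks always run once per capture.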

- _process()[source]

A full frame capture cycle.

_grab()s the frame, then handles streaming, writing, queueing, and indicating according to stream(), write(), queue(), and indicating, respectively.

- stream(to='T', ip=None, port=None, min_size=5, **kwargs)[source]

Enable streaming frames on capture.

Spawns a Net_Node with Hardware.init_networking(), and creates a streaming queue with Net_Node.get_stream() according to args.

Sets Camera.streaming

- Parameters

to (str) – ID of the recipient. Default ‘T’ for Terminal.

ip (str) – IP of recipient. If None (default), ‘localhost’. If None and to is ‘T’, prefs.get('TERMINALIP')

port (int, str) – Port of recipient socket. If None (default), prefs.get('MSGPORT'). If None and to is ‘T’, prefs.get('TERMINALPORT').

min_size (int) – Number of frames to collect before sending (default: 5). Use 1 to send frames as soon as they are available, sacrificing the efficiency from compressing multiple frames together

**kwargs – passed to Hardware.init_networking() and thus to Net_Node
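The min_size tradeoff can be pictured with a small batching helper. This is only an illustration of collecting frames before sending, not how Net_Node.get_stream() is implemented; `batch_frames` is a hypothetical name, and flushing a short final batch is an assumption.

```python
def batch_frames(frames, min_size=5):
    """Yield lists of up to ``min_size`` frames, flushing any remainder at the end."""
    batch = []
    for frame in frames:
        batch.append(frame)
        if len(batch) >= min_size:
            yield batch
            batch = []
    if batch:
        yield batch
```

With `min_size=1` every frame becomes its own message, which lowers latency at the cost of per-message overhead.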

- l_start(val)[source]

Begin capturing by calling Camera.capture()

- Parameters

val – unused

- l_stop(val)[source]

Stop capture by calling Camera.release()

- Parameters

val – unused

- write(output_filename=None, timestamps=True, blosc=True)[source]

Enable writing frames locally on capture

Spawns a Video_Writer to encode video, sets writing

- Parameters

output_filename (str) – path and filename of the output video. Extension should be .mp4, as videos are encoded with libx264 by default.

timestamps (bool) – if True, (timestamp, frame) tuples will be put in the _write_q. If False, timestamps will be generated by Video_Writer (not recommended at all).

blosc (bool) – if True, compress frames with blosc.pack_array() before putting in _write_q.

- _write_frame()[source]

Put frame into the _write_q, optionally compressing it with blosc.pack_array()

- _write_deinit()[source]

End the Video_Writer.

Blocks until the _write_q is empty, holding the release of the object.

- queue(queue_size=128)[source]

Enable stashing frames in a queue for a local consumer.

Other objects can get frames as they are acquired from q

- Parameters

queue_size (int) – max number of frames that can be held in q
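A local consumer pulling from q might look like the sketch below. It uses a plain `queue.Queue` and string placeholders for frames; the `None` end-of-stream marker and the `produce`/`consume` helpers are hypothetical and exist only so the example terminates.

```python
import queue
import threading
import time


def produce(q, n_frames):
    """Stand-in for the capture loop: put (timestamp, frame) tuples into q."""
    for i in range(n_frames):
        q.put((time.time(), f'frame-{i}'))
    q.put(None)  # hypothetical end-of-stream marker for this sketch only


def consume(q):
    """Pull frames until the end-of-stream marker arrives."""
    frames = []
    while True:
        item = q.get()
        if item is None:
            break
        timestamp, frame = item
        frames.append(frame)
    return frames


q = queue.Queue(maxsize=128)  # mirrors the default queue_size
producer = threading.Thread(target=produce, args=(q, 4))
producer.start()
received = consume(q)
producer.join()
```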

- property cam

Camera object.

If _cam hasn’t been initialized yet, use init_cam() to do so

- Returns

Camera object, different for each camera.

- property output_filename

Filename given to video writer.

If explicitly set, returns as expected.

If None, or path already exists while the camera isn’t capturing, a new filename is generated in the user directory.

- Returns

(str)

_output_filename
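The fallback rule described for output_filename can be sketched as a standalone function. The function name, its arguments, and the timestamped naming scheme are all assumptions made for illustration; only the decision rule (keep an explicit path unless it already exists while not capturing) comes from the description above.

```python
from datetime import datetime
from pathlib import Path


def resolve_output_filename(requested, directory, name='camera', capturing=False):
    """Return ``requested`` unless it is None or would clobber an existing file."""
    if requested is not None:
        path = Path(requested)
        if capturing or not path.exists():
            return str(path)
    # Fall back to a generated, timestamped name (naming scheme is an assumption).
    stamp = datetime.now().strftime('%Y%m%d-%H%M%S')
    return str(Path(directory) / f'{name}_{stamp}.mp4')
```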

- _grab()[source]

Capture a frame and timestamp.

Method must be overridden by subclass

- Returns

(str, numpy.ndarray) Tuple of isoformatted (str) or numeric timestamp returned by _timestamp(), and captured frame

- _timestamp(frame=None)[source]

Generate a timestamp for each _grab()

Must be overridden by subclass

- Parameters

frame – If needed by camera subclass, pass the frame or image object to get timestamp

- Returns

(str, int, float) Either an isoformatted (str) or numeric timestamp

- init_cam()[source]

Method to initialize camera object

Must be overridden by camera subclass

- Returns

camera object

- class PiCamera(camera_idx: int = 0, sensor_mode: int = 0, resolution: Tuple[int, int] = (1280, 720), fps: int = 30, format: str = 'rgb', *args, **kwargs)[source]

Bases: autopilot.hardware.cameras.Camera

Interface to the Raspberry Pi Camera Module via picamera

Parameters of the picamera.PiCamera class can be set after initialization by modifying the PiCamera.cam attribute, eg PiCamera().cam.exposure_mode = 'fixedfps' – see the picamera.PiCamera documentation for full documentation.

Note that some parameters, like resolution, can’t be changed after starting capture().

The Camera Module is a slippery little thing: fps and resolution are just requests to the camera, and aren’t necessarily followed with 100% fidelity. The possible framerates and resolutions are determined by the sensor_mode parameter, which by default tries to guess the best sensor mode based on the fps and resolution. See the Sensor Modes documentation for more details.

This wrapper uses a subclass, PiCamera.PiCamera_Writer, to capture frames decoded by the gpu directly from the preallocated buffer object. Currently the restoration from the buffer assumes that RGB, or generally shape[2] == 3, images are being captured. See this stackexchange post by Dave Jones, author of the picamera module, for a strategy for capturing grayscale images quickly.

This class also currently uses the default Video_Writer object, but it could be more performant to use the picamera.PiCamera.start_recording() method’s built-in ability to record video to a file. Try it out!

Todo

Currently timestamps are constructed with datetime.datetime.now().isoformat(), which is not altogether accurate. Timestamps should be gotten from the frame attribute, which depends on the clock_mode

References

https://blog.robertelder.org/recording-660-fps-on-raspberry-pi-camera/

Fast capture from the author of picamera - https://raspberrypi.stackexchange.com/a/58941/112948

More on fast capture and processing, see last example in section - https://picamera.readthedocs.io/en/release-1.12/recipes2.html#rapid-capture

- Parameters

camera_idx (int) – Index of picamera (default: 0, >=1 only supported on compute module)

sensor_mode (int) – Sensor mode, default 0 detects automatically from resolution and fps, note that sensor_mode will affect the available resolutions and framerates, see Sensor Modes for more information

resolution (tuple) – a tuple of (width, height) integers, but mind the note in the above documentation regarding the sensor_mode property and resolution

fps (int) – frames per second, but again mind the note on sensor_mode

format (str) – Format passed to picamera.PiCamera.start_recording(), one of ('rgb' (default), 'grayscale'). The 'grayscale' format uses the 'yuv' format, and extracts the luminance channel

*args () – passed to superclass

**kwargs () – passed to superclass

Attributes:

sensor_mode – Sensor mode, default 0 detects automatically from resolution and fps, note that sensor_mode will affect the available resolutions and framerates, see Sensor Modes for more information.

resolution – A tuple of ints, (width, height).

fps – Frames per second

rotation – Rotation of the captured image, derived from Camera.rotate * 90.

Methods:

init_cam() – Initialize and return the picamera.PiCamera object.

capture_init() – Spawn a PiCamera.PiCamera_Writer object to PiCamera._picam_writer and start_recording() in the set format

_grab() – Wait on the grab_event to be set, then clear it before returning the frame.

capture_deinit() – stop_recording() and close() the camera, releasing its resources.

release() – Release resources held by Camera.

Classes:

PiCamera_Writer(resolution[, format])Writer object for processing individual frames, see: https://raspberrypi.stackexchange.com/a/58941/112948

- property sensor_mode: int

Sensor mode, default 0 detects automatically from resolution and fps, note that sensor_mode will affect the available resolutions and framerates, see Sensor Modes for more information.

When set, if the camera has been initialized, will change the attribute in PiCamera.cam

- Returns

int

- property resolution: Tuple[int, int]

A tuple of ints, (width, height).

Resolution can’t be changed while the camera is capturing.

See Sensor Modes for more information re: how resolution relates to picamera.PiCamera.sensor_mode

- Returns

tuple of ints, (width, height)

- property fps: int

Frames per second

See Sensor Modes for more information re: how fps relates to picamera.PiCamera.sensor_mode

- Returns

int - fps

- property rotation: int

Rotation of the captured image, derived from Camera.rotate * 90.

Must be one of (0, 90, 180, 270)

Rotation can be changed during capture

- Returns

int - Current rotation
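The relationship between rotate and rotation can be sketched as follows. Whether the real property raises on an out-of-range value or handles it some other way is not stated, so the `ValueError` here is an assumption, as is the helper name.

```python
VALID_ROTATIONS = (0, 90, 180, 270)


def rotation_from_rotate(rotate):
    """Convert a count of clockwise quarter turns into degrees."""
    degrees = rotate * 90
    if degrees not in VALID_ROTATIONS:
        raise ValueError(f'rotation must be one of {VALID_ROTATIONS}, got {degrees}')
    return degrees
```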

- init_cam() picamera.PiCamera[source]

Initialize and return the picamera.PiCamera object.

Uses the stored camera_idx, resolution, fps, and sensor_mode attributes on init.

- Returns

picamera.PiCamera

- capture_init()[source]

Spawn a PiCamera.PiCamera_Writer object to PiCamera._picam_writer and start_recording() in the set format

- _grab() Tuple[str, numpy.ndarray][source]

Wait on the grab_event to be set, then clear it before returning the frame.

- Returns

(timestamp, frame) tuple

- capture_deinit()[source]

stop_recording() and close() the camera, releasing its resources.

- release()[source]

Release resources held by Camera.

Must be overridden by subclass.

Does not raise exception in case some general camera release logic should be put here…

- class PiCamera_Writer(resolution: Tuple[int, int], format: str = 'rgb')[source]

Bases: object

Writer object for processing individual frames, see: https://raspberrypi.stackexchange.com/a/58941/112948

- Parameters

resolution (tuple) – (width, height) tuple used when making numpy array from buffer

- Variables

grab_event (threading.Event) – Event set whenever a new frame is captured, cleared by the parent class when the frame is consumed.

frame (numpy.ndarray) – Captured frame

timestamp (str) – Isoformatted timestamp of time of capture.

Methods:

write(buf) – Reconstitute the buffer into a numpy array in PiCamera_Writer.frame and make a timestamp in PiCamera_Writer.timestamp, then set the PiCamera_Writer.grab_event
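The handshake between write() and _grab() through grab_event can be sketched with a toy writer. `SketchWriter` and `grab` are hypothetical; the sketch keeps raw bytes where the real writer rebuilds a numpy array, and the timeout is an addition so the example cannot hang.

```python
import threading
from datetime import datetime


class SketchWriter:
    """Hypothetical stand-in for the writer: stores the latest buffer and signals it."""

    def __init__(self):
        self.grab_event = threading.Event()
        self.frame = None
        self.timestamp = None

    def write(self, buf):
        # The real writer rebuilds a numpy array here; this sketch keeps raw bytes.
        self.frame = bytes(buf)
        self.timestamp = datetime.now().isoformat()
        self.grab_event.set()


def grab(writer, timeout=1.0):
    """Wait for a new frame, clear the event, and return (timestamp, frame)."""
    if not writer.grab_event.wait(timeout):
        raise TimeoutError('no frame arrived in time')
    writer.grab_event.clear()
    return writer.timestamp, writer.frame
```

Clearing the event in the consumer means each call to `grab` blocks until the producer has written a fresh buffer.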

- class Camera_CV(camera_idx=0, **kwargs)[source]

Bases: autopilot.hardware.cameras.Camera

Capture Video from a webcam with OpenCV

By default, OpenCV will select a suitable backend for the indicated camera. Some backends have difficulty operating multiple cameras at once, so the performance of this class will be variable depending on camera type.

Note

OpenCV must be installed to use this class! A prebuilt opencv binary is available for the raspberry pi, but it doesn’t take advantage of some performance-enhancements available to OpenCV. Use autopilot.setup.run_script opencv to compile OpenCV with these enhancements.

If your camera isn’t working and you’re using v4l2, to print debugging information you can run:

    # set the debug log level
    echo 3 > /sys/class/video4linux/videox/dev_debug
    # check logs
    dmesg

- Parameters

camera_idx (int) – The index of the desired camera

**kwargs – Passed to the Camera metaclass.

- Variables

camera_idx (int) – The index of the desired camera

last_opencv_init (float) – See OPENCV_LAST_INIT_TIME

last_init_lock (threading.Lock) – Lock for setting last_opencv_init

Attributes:

fps – Attempts to get FPS with cv2.CAP_PROP_FPS, uses 30fps as a default

shape – Attempts to get image shape from cv2.CAP_PROP_FRAME_WIDTH and HEIGHT; returns a (width, height) tuple

backend – capture backend used by OpenCV for this camera

Device information from v4l2-ctl

Methods:

_grab() – Reads a frame with cam.read()

_timestamp([frame]) – Attempts to get timestamp with cv2.CAP_PROP_POS_MSEC.

init_cam() – Initializes OpenCV Camera

release() – Release resources held by Camera.

- property fps

Attempts to get FPS with cv2.CAP_PROP_FPS, uses 30fps as a default

- Returns

framerate

- Return type

- property shape

Attempts to get image shape from cv2.CAP_PROP_FRAME_WIDTH and HEIGHT

- Returns

(width, height)

- Return type

tuple

- _timestamp(frame=None)[source]

Attempts to get timestamp with cv2.CAP_PROP_POS_MSEC. Frame does not need to be passed to this method, as timestamps are retrieved from cam

Todo

Convert this float timestamp to an isoformatted system timestamp

- Returns

milliseconds since capture start

- Return type
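One possible way to approach the Todo above is to offset a recorded capture start time by the returned milliseconds. This is a sketch, not something the class does: it assumes the caller stored a `capture_start` datetime when capture began, and `msec_to_isoformat` is a hypothetical helper.

```python
from datetime import datetime, timedelta


def msec_to_isoformat(capture_start, pos_msec):
    """Offset a capture start time by ``pos_msec`` milliseconds and isoformat it."""
    return (capture_start + timedelta(milliseconds=pos_msec)).isoformat()
```

The result is only as accurate as the recorded start time, so clock drift between the camera and the system is not addressed here.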

- property backend

capture backend used by OpenCV for this camera

- Returns

name of capture backend used by OpenCV for this camera

- Return type

- init_cam()[source]

Initializes OpenCV Camera

To avoid overlapping resource allocation requests, checks the last time any Camera_CV object was instantiated and makes sure it has been at least 2 seconds since then.

- Returns

camera object

- Return type

cv2.VideoCapture

- class Camera_Spinnaker(serial=None, camera_idx=None, **kwargs)[source]

Bases: autopilot.hardware.cameras.Camera

Capture video from a FLIR brand camera with the Spinnaker SDK.

- Parameters

serial (str) – Serial number of desired camera

camera_idx (int) – If no serial provided, select camera by index. Using serial is HIGHLY RECOMMENDED.

**kwargs – passed to Camera metaclass

Note

PySpin and the Spinnaker SDK must be installed to use this class. Please use the install_pyspin.sh script in setup

See the documentation for the Spinnaker SDK and PySpin here:

https://www.flir.com/products/spinnaker-sdk/

- Variables

serial (str) – Serial number of desired camera

camera_idx (int) – If no serial provided, select camera by index. Using serial is HIGHLY RECOMMENDED.

system (PySpin.System) – The PySpin System object

cam_list (PySpin.CameraList) – The list of PySpin Cameras available to the system

nmap – A reference to the nodemap from the GenICam XML description of the device

base_path (str) – The directory and base filename that images will be written to if object is writing. eg:

    base_path = '/home/user/capture_directory/capture_'
    image_path = base_path + 'image1.png'

img_opts (PySpin.PNGOption) – Options for saving .png images, made by write()

Attributes:

ATTR_TYPES – Conversion from data types to pointer types

ATTR_TYPE_NAMES – Conversion from data types to human-readable names

RW_MODES – Conversion from read/write mode to {'read': bool, 'write': bool} descriptor

bin – Camera Binning.

exposure – Set Exposure of camera

fps – Acquisition Framerate

frame_trigger – Set camera to lead or follow hardware triggers

acquisition_mode – Image acquisition mode

readable_attributes – All device attributes that are currently readable with get()

writable_attributes – All device attributes that are currently writeable with set()

device_info – Get all information about the camera

Methods:

init_cam() – Initialize the Spinnaker Camera

capture_init() – Prepare the camera for acquisition

capture_deinit() – De-initializes the camera after acquisition

_process() – Modification of the Camera._process() method for Spinnaker cameras

_grab() – Get next timestamp and PySpin Image

_timestamp([frame]) – Get the timestamp from the passed image

write([output_filename, timestamps, blosc]) – Sets camera to save acquired images to a directory for later encoding.

_write_frame() – Write frame to base_path + timestamp + '.png' with PySpin.Image.Save()

_write_deinit() – After capture, write images in base_path to video with Directory_Writer

get(attr) – Get a camera attribute.

set(attr, val) – Set a camera attribute

list_options(name) – List the possible values of a camera attribute.

release() – Release all PySpin objects and wait on writer, if still active.

- ATTR_TYPES = {}

Conversion from data types to pointer types

- ATTR_TYPE_NAMES = {}

Conversion from data types to human-readable names

- RW_MODES = {}

Conversion from read/write mode to {'read': bool, 'write': bool} descriptor

- init_cam()[source]

Initialize the Spinnaker Camera

Initializes the camera, system, cam_list, node map, and the camera methods and attributes used by get() and set()

- Returns

The Spinnaker camera object

- Return type

PySpin.Camera

- capture_init()[source]

Prepare the camera for acquisition

Calls the camera’s BeginAcquisition method and populates shape

- _process()[source]

Modification of the Camera._process() method for Spinnaker cameras

Because the objects returned from the _grab() method are image pointers rather than numpy.ndarrays, they need to be handled differently.

More details on the differences are given in the _write_frame(),

- _timestamp(frame=None)[source]

Get the timestamp from the passed image

- Parameters

frame (PySpin.Image) – Currently grabbed image

- Returns

PySpin timestamp

- Return type

- write(output_filename=None, timestamps=True, blosc=True)[source]

Sets camera to save acquired images to a directory for later encoding.

For performance, rather than encoding during acquisition, save each image as a (lossless) .png image in a directory generated by output_filename.

After capturing is complete, a Directory_Writer encodes the images to an x264 encoded .mp4 video.

- Parameters

output_filename (str) – Directory to write images to. If None (default), generated by output_filename

timestamps (bool) – Not used, timestamps are always appended to filenames.

blosc (bool) – Not used, images are directly saved.

- _write_deinit()[source]

After capture, write images in base_path to video with Directory_Writer

Camera object will remain open until writer has finished.

- property bin

Camera Binning.

Attempts to bin on-device, and use averaging if possible. If averaging not available, uses summation.

- Parameters

tuple – tuple of integers, (Horizontal, Vertical binning)

- Returns

(Horizontal, Vertical binning)

- Return type

- property exposure

Set Exposure of camera

Can be set with

'auto' - automatic exposure control. Note that this will limit framerate

float from 0-1 - exposure duration proportional to fps. eg. if fps = 10, setting exposure = 0.5 means exposure will be set as 50ms

float or int >1 - absolute exposure time in microseconds
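The arithmetic behind the proportional setting can be worked through in a small helper. This is a sketch of the rule as described, not the property's code: `exposure_microseconds` is a hypothetical name, 'auto' is deliberately left out, and treating a value of exactly 1 as proportional is an assumption.

```python
def exposure_microseconds(exposure, fps):
    """Resolve a numeric exposure setting to an absolute time in microseconds."""
    if isinstance(exposure, bool) or not isinstance(exposure, (int, float)):
        raise TypeError("this sketch only handles numeric settings, not 'auto'")
    if exposure <= 0:
        raise ValueError('exposure must be positive')
    if exposure <= 1:
        # Proportional: a fraction of the frame period (1 / fps seconds).
        return exposure * 1_000_000 / fps
    # Values above 1 are already absolute microseconds.
    return float(exposure)
```

For the example in the text, fps = 10 gives a 100 ms frame period, so an exposure of 0.5 resolves to 50 ms, or 50,000 microseconds.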

- property fps

Acquisition Framerate

Set with integer. If set with None, ignored (superclass sets FPS to None on init)

- Returns

from cam.AcquisitionFrameRate.GetValue()

- Return type

- property frame_trigger

Set camera to lead or follow hardware triggers

If 'lead', Camera will send TTL pulses from Line 2.

If 'follow', Camera will follow triggers from Line 3.

- property acquisition_mode

Image acquisition mode

One of

'continuous' - continuously acquire frames

'single' - acquire a single frame

'multi' - acquire a finite number of frames.

Warning

Only 'continuous' has been tested.

- property readable_attributes

All device attributes that are currently readable with get()

- Returns

A dictionary of attributes that are readable and their current values

- Return type

- property writable_attributes

All device attributes that are currently writeable with set()

- Returns

A dictionary of attributes that are writeable and their current values

- Return type

- get(attr)[source]

Get a camera attribute.

Any value in readable_attributes can be read. Attempts to get numeric values with .GetValue, otherwise gets a string with .ToString, so be cautious with types.

If attr is a method (ie. in ._camera_methods), execute the method and return the value

- set(attr, val)[source]

Set a camera attribute

Any value in writable_attributes can be set. If attribute has a .SetValue method (ie. accepts numeric values), attempt to use it, otherwise use .FromString.

- Parameters

attr (str) – Name of attribute to be set

val (str, int, float) – Value to set attribute
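The numeric-first, string-fallback behavior of get() and set() can be sketched with stand-in node objects. `NumericNode`, `StringNode`, `get_attr`, and `set_attr` are hypothetical, and checking with `hasattr` is an assumption about how the fallback is chosen; no PySpin calls are made.

```python
class NumericNode:
    """Hypothetical stand-in for a node exposing GetValue/SetValue."""

    def __init__(self, value):
        self._value = value

    def GetValue(self):
        return self._value

    def SetValue(self, value):
        self._value = value


class StringNode:
    """Hypothetical stand-in for a node exposing only ToString/FromString."""

    def __init__(self, text):
        self._text = text

    def ToString(self):
        return self._text

    def FromString(self, text):
        self._text = str(text)


def get_attr(node):
    # Prefer the numeric accessor; fall back to the string form.
    if hasattr(node, 'GetValue'):
        return node.GetValue()
    return node.ToString()


def set_attr(node, val):
    # Mirror the same preference when writing.
    if hasattr(node, 'SetValue'):
        node.SetValue(val)
    else:
        node.FromString(val)
```

Because the fallback returns strings, callers comparing values should check the type they got back before doing arithmetic.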

- list_options(name)[source]

List the possible values of a camera attribute.

- Parameters

name (str) – name of attribute to query

- Returns

Dictionary with {available options: descriptions}

- Return type

- property device_info

Get all information about the camera

Note that this is distinct from camera attributes like fps, instead this is information like serial number, version, firmware revision, etc.

- Returns

{feature name: feature value}

- Return type

- class Video_Writer(q, path, fps=None, timestamps=True, blosc=True)[source]

Bases: multiprocessing.context.Process

Encode frames as they are acquired in a separate process.

Must call start() after initialization to begin encoding.

Encoding continues until ‘END’ is put in q.

Timestamps are saved in a .csv file with the same path as the video.

- Parameters

q (Queue) – Queue into which frames will be dumped

path (str) – output path of video

fps (int) – framerate of output video

timestamps (bool) – if True (default), input will be of form (timestamp, frame). If False, input will just be frames and timestamps will be generated as the frame is encoded (not recommended)

blosc (bool) – if True, frames in the q will be compressed with blosc. If False, uncompressed

- Variables

timestamps (list) – Timestamps for frames, written to .csv on completion of encoding

Methods:

run() – Open a skvideo.io.FFmpegWriter and begin processing frames from q

- run()[source]

Open a skvideo.io.FFmpegWriter and begin processing frames from q

Should not be called directly: it overrides the multiprocessing.Process.run() method, so call Video_Writer.start() instead.

Encoding continues until ‘END’ is put in the queue.
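The 'END' sentinel loop can be sketched in a single process. This is an illustration only: it uses `queue.Queue` rather than a separate process, a plain callback in place of skvideo.io.FFmpegWriter, and a one-timestamp-per-row CSV layout, all of which are assumptions; `drain_until_end` is a hypothetical name.

```python
import csv
import io
import queue


def drain_until_end(q, encode_frame, csv_file):
    """Consume (timestamp, frame) tuples until 'END', then write timestamps as CSV."""
    timestamps = []
    while True:
        item = q.get()
        if isinstance(item, str) and item == 'END':
            break
        timestamp, frame = item
        encode_frame(frame)
        timestamps.append(timestamp)
    # Timestamps are written only once encoding has finished.
    writer = csv.writer(csv_file)
    for timestamp in timestamps:
        writer.writerow([timestamp])
    return timestamps


q = queue.Queue()
for i in range(3):
    q.put((f't{i}', f'frame-{i}'))
q.put('END')

encoded = []
csv_buffer = io.StringIO()
timestamps = drain_until_end(q, encoded.append, csv_buffer)
```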